Singularity

Scientists See Self-Replicating Nano Robots As A Coming Reality

Many computer scientists think the age of the self-replicating, evolving machine may be upon us.

“The fool hath said in his heart, There is no God.” Psalm 14:1

It is an idea that has been around for a while – in fiction. Stanislaw Lem in his 1964 novel The Invincible told the story of a spaceship landing on a distant planet to find a mechanical life form, the product of millions of years of mechanical evolution. It was an idea that would resurface many decades later in the Matrix trilogy of movies, as well as in software labs.

In fact, self-replicating machines have a much longer, and more nuanced, past. They were indirectly proposed in 1802, when William Paley formulated the first teleological argument of machines producing other machines.

In his book Natural Theology, Paley proposed the famous “watchmaker analogy”. He argued that something as complex as a watch could only exist if there was a watchmaker. Since the universe and all living beings were far more complex than a watch, there had to be a God – a divine watchmaker. Interestingly, Paley conceded that his argument would be moot if the watch could make itself. This detail has been forgotten during the culture wars that followed Darwin’s publication of On the Origin of Species.

Self-replicating machines have been around, at least in theory, for decades. In 1949, the mathematician John von Neumann showed how a machine could replicate itself. He called it the “universal constructor” because the machine was both an active component of the construction and the target of the copying process.

This means that the medium of replication is, at the same time, the medium of storage of the instructions for the replication. Von Neumann’s big idea allowed open-ended complexity, and therefore errors in the replication – in other words, it opened up self-replicating non-biological systems to the laws of evolution. His brilliant insight predated the discovery of the DNA double helix by Crick and Watson. He went on to develop mathematical entities that reproduced themselves and evolved over time, which he called “cellular automata”.

Although von Neumann’s model initially worked only in mathematical space, it was a clear demonstration that the principles of evolution could apply to machines. Since then, engineers have taken the principle on board and have produced physical applications such as RepRap machines – 3D printers that can print most of their own components.

The next logical step would be to apply these principles in robot reproduction. For instance, we could have a robotic factory with three classes of robots: one for mining and transporting raw material, one for assembling raw materials into finished robots and one for designing processes and products. The last class, the “brains” of the autonomous robotic factory, would be artificial intelligence systems. But could these robots also “evolve”?

The Victorian novelist Samuel Butler thought so. A contemporary of Charles Darwin, Butler spent 20 years of his life attacking the foundations of Darwinism. He was not so much against the idea of evolution per se; his tiff with Darwin revolved around the role of intelligence. For Butler the intelligence of evolution and the evolution of intelligence showed common principles, of which life was at the same time both the cause and the result. On this basis, he concluded “it was the race of the intelligent machines and not the race of men which would be the next step in evolution”.

In his novel Erewhon (an anagram of “nowhere”) he describes a utopian society that opted to banish machines. They were deemed to be dangerous, a notion that has influenced fiction, and non-fiction, to our day. The economist Tyler Cowen, in his recent book Average is Over, warns that thinking machines will take our jobs.

Safety legislation impedes, although it does not preclude, the development of a fully autonomous robotic factory that reproduces itself. But planting such a factory on a distant planet is a different story. Mars colonisation could benefit from self-reproducing robots preparing the planet for human habitation. The physicist and visionary George Dyson has proposed using self-replicating robots to cut and ferry water-ice from Enceladus (a frozen moon of Saturn) to Mars and use it to terraform the Red Planet.

Some biologists believe that life on Earth started on Mars, the seeds of our biosphere carried here by meteorites blasted off the Martian surface billions of years ago. If that is true, it would then be an irony of apocalyptic proportions if future intelligent machines from Mars rebelled against their originators, attacked Earth in order to colonise it and got rid of the current inhabitants. Unlike HG Wells’s fictional invaders, these robotic Martians of the future would be impervious to biological germs. (But perhaps not to computer viruses.)

Ultimately, the question of whether self-reproducing robots will evolve boils down to the capability of artificial intelligence systems to self-improve. Only then could the “brains” of the robotic factory build evolved robots without the need for human designers.

It’s already happening. Machine learning has been around for years. New algorithms for data analysis, combined with increasing computing power and interconnectedness, mean that intelligent machines will be able to comprehend massive amounts of contextual information. They will not only be able to understand what a piece of information is about, but also how it relates to other information. The capability to understand correlations and get “the big picture” could potentially enable them to set their own goals. Already there are autonomous robotic systems that do that, military drones being an example. Self-improvement could be next.

Perhaps by exploring and learning about human evolution, intelligent machines will come to the conclusion that sex is the best way for them to evolve. Rather than self-replicating, like amoebas, they may opt to simulate sexual reproduction with two, or indeed innumerable, sexes.

Sex would defend them from computer viruses (just as biological sex may have evolved to defend organisms from parasitical attack), make them more robust and accelerate their evolution. Software engineers already use so-called “genetic algorithms” that mimic evolution.
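A genetic algorithm works roughly like this: a population of candidate “genomes” is scored, the fitter ones survive and breed through crossover (the software analogue of sexual reproduction), and random mutations stand in for copying errors. The Python sketch below is a toy illustration only, not any particular system; the bit-string genomes, the “OneMax” fitness function (count the 1s) and all parameter values are assumptions chosen for simplicity.

```python
import random


def fitness(genome: list[int]) -> int:
    # Toy "OneMax" fitness (an assumption): the more 1 bits, the fitter.
    return sum(genome)


def crossover(a: list[int], b: list[int], rng: random.Random) -> list[int]:
    # Single-point crossover: the child takes a prefix from one parent
    # and the matching suffix from the other.
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def mutate(genome: list[int], rate: float, rng: random.Random) -> list[int]:
    # Flip each bit independently with probability `rate` (copying errors).
    return [1 - g if rng.random() < rate else g for g in genome]


def evolve(length: int = 20, pop_size: int = 30, generations: int = 60,
           mutation_rate: float = 0.02, seed: int = 0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        # Truncation selection: the fitter half survives unchanged and breeds.
        parents = population[: pop_size // 2]
        children = []
        while len(children) < pop_size - len(parents):
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), mutation_rate, rng))
        population = parents + children
    return max(population, key=fitness), history


best, history = evolve()
print(fitness(best), history[:5])
```

Because the fitter half of each generation is carried over unchanged, the best score recorded in `history` can never go down. In principle, swapping in a different fitness function is how such an algorithm would be pointed at a real design problem.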

Nanotechnologists, like Eric Drexler, see the future of intelligent machines at the level of molecules: tiny robots that evolve and – like in Lem’s novel – come together to form intelligent superorganisms. Perhaps the future of artificial intelligence will be both silicon- and carbon-based: digital brains directing complex molecular structures to copulate at the nanometre level and reproduce. Perhaps the cyborgs of the future may involve human participation in robot sexual reproduction, and the creation of new, hybrid species.

If that is the future, then we may have to reread Paley’s Natural Theology and take notice. Not in the way that creationists do, but as members of an open society that must face up to the possible ramifications of our technology. Unlike natural evolution, where high-level consciousness and intelligence evolved late as by-products of cerebral development in mammals, in robotic evolution intelligence will be the guiding force. Butler will be vindicated. Brains will come before bodies. Robotic evolution will be Intelligent Design par excellence. The question is not whether it may happen, but whether we would want it to happen.

source – Telegraph UK

Days Of Lot

Netflix Launching Dating Reality Show Called ‘Sexy Beasts’ Where Single Contestants Use Prosthetics To Resemble Demonic Transhumanistic Freaks

‘Sexy Beasts’ is a reality dating show from Netflix in which singletons go on dates to meet their soulmate, but as transhumanistic freaks.

Jesus warns in Matthew 24 that the end times will be a whole lot like the Days of Noah, so that means that transhumanism will play a big role. Netflix and ‘Sexy Beasts’ are much more timely than they think they are, wouldn’t you say?

I understand the concept behind a ‘blind date’, which causes you to focus on something other than whether or not a person is good looking, because looks aren’t everything, right? Right. But the new Netflix series ‘Sexy Beasts’ goes waaaaaaaay past merely disguising the superficial, and winds up smack dab in the end times Bible prophecy zone. For real. Better buckle up for this one: ‘Sexy Beasts’ is one Hell of a ride.

“There were giants in the earth in those days; and also after that, when the sons of God came in unto the daughters of men, and they bare children to them, the same became mighty men which were of old, men of renown.” Genesis 6:4 (KJB)

Dating show ‘Sexy Beasts’ makes a great segue into talking, once again, about how the whole world seems to be shifting right back to Genesis 6 and the Days of Noah. Fallen angels having sex with human women, who then give birth to a race of hybrid, transhumanist giants, is what the Days of Noah is really all about.

Right now would be a great time to order your copy of ‘Genesis 6 Giants’ to see all the stuff that Netflix leaves out.

‘Sexy Beasts’ trailer reveals new Netflix dating show

FROM CNET: I could try to explain Netflix’s dating series Sexy Beasts, but it’s probably better if you just watch the trailer, OK?

Sexy Beasts is a reality show in which singletons go on dates to meet their soulmate. But to make sure they’re focusing on personality rather than looks, the twist is that both lovelorn singles are decked out in high-quality prosthetics to look like colorful animals, aliens and, um, possibly sea creatures?

Sexy Beasts began life as a British series on the BBC in 2014, and the Masked Singer-style format is getting a new lease on life on streaming service Netflix. It depicts disguised dates taking place in the UK and US, filmed late last year during the COVID-19 pandemic.

Now The End Begins is your front line defense against the rising tide of darkness in the last Days before the Rapture of the Church

- HOW TO DONATE: Click here to view our WayGiver Funding page

When you contribute to this fundraising effort, you are helping us to do what the Lord called us to do. The money you send in goes primarily to the overall daily operations of this site. When people ask for Bibles, we send them out at no charge. When people write in and say how much they would like gospel tracts but cannot afford them, we send them a box at no cost to them for either the tracts or the shipping, no matter where they are in the world. Even all the way to South Africa. We even restarted our weekly radio Bible study on Sunday nights again, thanks to your generous donations. All this is possible because YOU pray for us, YOU support us, and YOU give so we can continue growing.

But whatever you do, don’t do nothing. Time is short and we need your help right now. If every one of the 15,860+ people on our daily mailing list gave $4.50, we would reach our goal immediately. If every one of our 150,000+ followers on Facebook gave $1.00 each, we would reach 300% of our goal. The same goes for our 15,900 followers on Twitter. But sadly, many will not give, so we need the ones who can and who will give to be generous. As generous as possible.

“Looking for that blessed hope, and the glorious appearing of the great God and our Saviour Jesus Christ;” Titus 2:13 (KJV)

“Thank you very much!” – Geoffrey, editor-in-chief, NTEB

- HOW TO DONATE: Click here to view our WayGiver Funding page

End Times

Shocking New Study Shows That When Humans Are Touched By A Humanoid Robot It Makes Them More Likely To Listen To And Follow Their Orders

Researchers in Germany say the touch of a humanoid robot makes people happier and more likely to follow their requests as cyborgs.

We live in a world where we have been groomed to think of algorithms and chunks of plastic and metal as being on the same level as human beings. Don’t believe me? Then why do you say ‘thank you’ to Alexa after she completes a task, why do you joke with Siri, and why do you speak to your machines in hundreds of other ways? Because the Mark of the Beast System has trained you to do that.

“And deceiveth them that dwell on the earth by the means of those miracles which he had power to do in the sight of the beast; saying to them that dwell on the earth, that they should make an image to the beast, which had the wound by a sword, and did live. And he had power to give life unto the image of the beast, that the image of the beast should both speak, and cause that as many as would not worship the image of the beast should be killed.” Revelation 13:14,15 (KJB)

Blow-up sex dolls have been the fodder of stand-up comics for years, but there is nothing funny about what’s happening now with ultra life-like humanoid robots that humans are not only having sex with, but falling in love with emotionally. That is overtly demonic and all part of the coming dispensation in which Antichrist will rule over this fallen world. Don’t talk to your devices as if they were real, and don’t have anything to do with the coming VR and AR fake reality the tech gods seek to enslave us with.

We have consistently told you that the drive to full human microchip implantation comes in three stages, which are as follows:

- STAGE ONE: Getting the whole world in front of a computer. This was successfully accomplished in 1994 with the twin whammy of the release of the Internet for public use, and the stratospheric rise of Windows software in personal computing systems. This stage lasted from 1994 to 2007, when Apple was the first company to market with a successful mobile device, or smartphone as they are commonly called.

- STAGE TWO: Getting the computer on you. Now that the whole world was attached to personal computers and laptops, Stage Two is where the computer went from being in front of you to being on you. Stop anyone on the street and ask them to show you their mobile device, and you will see it is somewhere on their body. As previously stated, this stage began in 2007 with the iPhone from Apple, and is currently coming to an end here in 2019/2020. Stage Three is the third and final stage in this process.

- STAGE THREE: Getting the computer inside you. Augmented reality smart glasses, in all their varied mutations, are the bridge to human implantable microchips and computer processors. When this stage is fully realized, wearers of the smart glasses will be able to have full 3D interactions in the air, and computer screens as we know them today will cease to exist. The technology developed during this stage, 2020 – 2026/28, will include implantable devices that supplement the smart glasses. This is where Augmented Reality and human implantables will merge.

All of this is the precursor to the coming One World System under Antichrist, and his Mark of the Beast. Now you can see that when the Mark is rolled out, it will not be some new thing that boggles people’s minds, no, just the opposite. When the Mark of the Beast comes along after the Pretribulation Rapture of the Church, the Mark will simply be the ‘latest and greatest’ piece of technology to a world that has already been using Augmented Reality systems and gotten used to seeing things in 3D.

Being touched by a humanoid robot makes people happier, more likely to listen to machines

FROM STUDY FINDS: Instead of being reliant on other humans, researchers are hoping that one day robots may be able to fulfill the roles of therapists, personal trainers, and even life coaches. Their study follows the widespread increase of touch starvation during the COVID-19 pandemic. Several studies have pointed out how physical distancing and isolation have created psychological complications over the last year. Does a reassuring pat on the back bring you comfort during a tough day? A new study finds that, when it comes to touching, people aren’t even picky about who’s doing the touching.

People who go a long time without touch, also called “skin hunger” or “affection deprivation,” may experience a variety of negative physiological effects that increase feelings of stress, depression, and anxiety. Some studies even find that these people may have an increased likelihood of infections due to immune system changes.

Study authors note scientists continue to explore the effects of physical contact with robots. However, while some studies detect meaningful effects, others find no benefit to a robotic hug.

A motivational robot?

In this research, 48 students engaged in a counselling conversation with the humanoid robot NAO – a programmable research robot often used for education and research purposes. During the course of the conversation, for some participants, the robot briefly and seemingly spontaneously patted the back of the participant’s hand. This differed from the design of other studies, which have relied on human-initiated touch. In response to the robot’s touch, most participants smiled and laughed, and none pulled away. Results show those who were touched were more likely to go along with the robot urging them to show interest in a particular academic course discussed during the conversation.

Participants also reported a better emotional state after the robot’s pat on the hand. Researchers note that those whom the robot did not touch still rated the conversation just as favorably. The hope is that scientists can harness the impact on request compliance so robots can engage in motivational jobs, such as persuading someone to exercise more.

“A robot’s non-functional touch matters to humans,” Laura Hoffmann from Ruhr University and her team write in a media release. “Slightly tapping human participants’ hands during a conversation resulted in better feelings and more compliance to the request of a humanoid robot.”

The findings were published in the journal PLOS One.

End Times

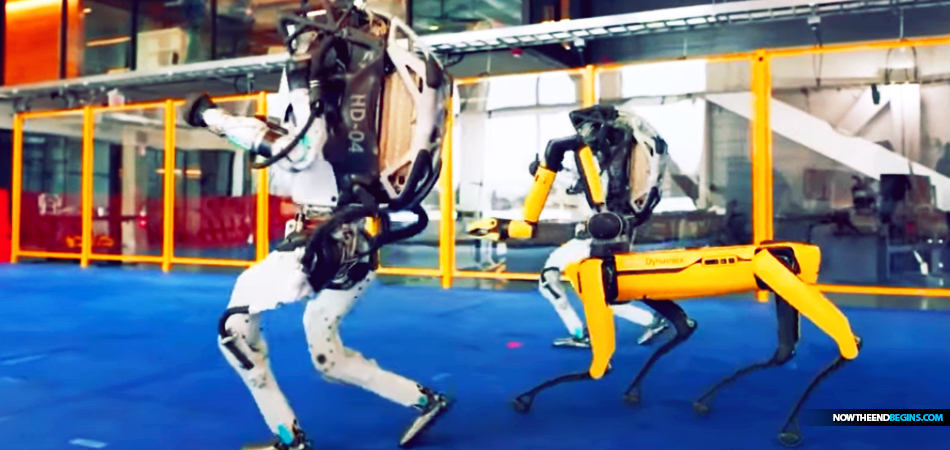

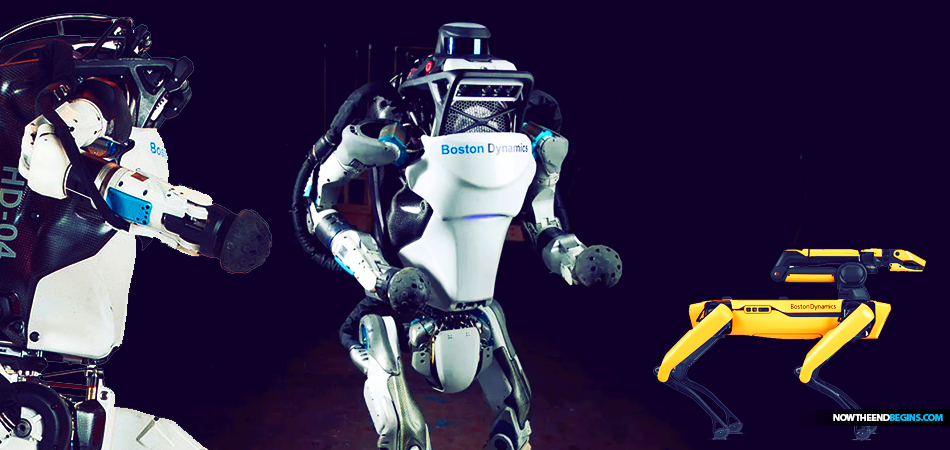

Boston Dynamics Finally Progresses To Point Where Their Robots Now Move And Act Like The Ones In Those Terrifying Dystopian Future Movies

Boston Dynamics is a cutting-edge robotics company that’s spent decades behind closed doors making robots that move in ways we’ve only seen in science fiction films.

In the early 2000s, there was a whole slew of dystopian robot movies like ‘I, Robot’, ‘The Matrix’, ‘Surrogates’, ‘A.I. Artificial Intelligence’ and many others. They were thrilling and terrifying at the same time, yet the realization of that technology was far, far into the future, right? Wrong. The vast majority of the things predicted in those movies have either already come to pass or are in the process of doing so. At Boston Dynamics, the future is yesterday.

“And he doeth great wonders, so that he maketh fire come down from heaven on the earth in the sight of men, And deceiveth them that dwell on the earth by the means of those miracles which he had power to do in the sight of the beast; saying to them that dwell on the earth, that they should make an image to the beast, which had the wound by a sword, and did live. And he had power to give life unto the image of the beast, that the image of the beast should both speak, and cause that as many as would not worship the image of the beast should be killed.” Revelation 13:13-15 (KJB)

In the early ‘blog days’ of NTEB, we did articles on robot technology, and it was amazing how far they had come by 2011 and 2016, but here in 2021 these robots move with human-like mobility, with refined, complex movements and gestures, in a way that is most unsettling when you first see it. When you add the AI components of Virtual and Augmented Reality to that, you see very quickly where all this is headed. But don’t worry, the folks at Boston Dynamics assure us that, even though they look just like the robots in those scary movies, they could never actually revolt and take over. Whew! What a relief. So glad they said that could never happen. (rolling my eyes).

Boston Dynamics: Inside the workshop where robots of the future are being built

FROM CBS NEWS: They occasionally release videos on YouTube of their life-like machines spinning, somersaulting or sprinting, which are greeted with fascination and fear. We’ve been trying, without any luck, to get into Boston Dynamics’ workshop for years, and a few weeks ago they finally agreed to let us in. After working out strict COVID protocols, we went to Massachusetts to see how they make robots do the unimaginable.

From the outside, Boston Dynamics headquarters looks pretty normal. Inside, however, it’s anything but. If Willy Wonka made robots, his workshop might look something like this. There are robots in corridors, offices and kennels. They trot and dance and whirl, and the 200-or-so human roboticists who build and often break them barely bat an eye.

That is Atlas, the most human-looking robot they’ve ever made.

It’s nearly 5 feet tall, weighs 175 pounds, and is programmed to run, leap and spin like an automated acrobat. Marc Raibert, the founder and chairman of Boston Dynamics, doesn’t like to play favorites, but definitely has a soft spot for Atlas.

Marc Raibert: So here’s a little bit of a jump.

Anderson Cooper: I mean, that’s incredible. (LAUGH)

Atlas isn’t doing all this on its own. Technician Bryan Hollingsworth is steering it with this remote control. But the robot’s software allows it to make other key decisions autonomously.

Marc Raibert: So really the robot is

Anderson Cooper: That’s incredible–

Marc Raibert: You know, doing all its own balance, all its own control. Bryan’s just steering it, telling it what speed and direction. Its computers are– adjusting how the legs are placed and what forces it’s applying–

Marc Raibert: In order to keep it– balanced.

Atlas balances with the help of sensors, as well as a gyroscope and three on-board computers. It was definitely built to be pushed around.

Marc Raibert: Good, push it a little bit more. It’s just trying to keep its balance. Just like you will, if I push you. And you can push it in any direction, you can push it from the side. (LAUGH)

Making machines that can stay upright on their own and move through the world with the ease of an animal or human has been an obsession of Marc Raibert’s for 40 years.

Anderson Cooper: The space of time you’ve been working in is nothing compared to the time it’s taken for animals and humans to develop.

Marc Raibert: Some people look at me and say, “Oh, Raibert, you’ve been stuck on this problem for 40 years.” Animals are amazingly good, and people, at– at what they do. You know, we’re so agile. We’re so versatile. We really haven’t achieved what humans can do yet. But I think– I think we can.

Raibert isn’t making it easy for himself: he’s given most of his robots legs.

Anderson Cooper: Why focus on, on legs? I would think wheels would be easier.

Marc Raibert: Yeah, wheels and tracks are great if you have a prepared surface like a road or even a dirt road. But people and animals can go anywhere on earth– using their legs. And, so, that, you know, that was the inspiration.

Some of the first contraptions he built in the early 1980s bounced around on what looked like pogo sticks. They appeared in this documentary when Raibert was a pioneering professor of robotics and computer science at Carnegie Mellon. He founded Boston Dynamics in 1992, and with CEO Robert Playter has been working for decades to perfect how robots move.
