End Times

Google’s DeepMind Machine-Generated Speech Breakthrough Moves Ever Closer To Human Speech

Google’s DeepMind unit, which is working to develop super-intelligent computers, has created a system for machine-generated speech that it says outperforms existing technology by 50 percent.

“But thou, O Daniel, shut up the words, and seal the book, even to the time of the end: many shall run to and fro, and knowledge shall be increased.” Daniel 12:4 (KJV)

U.K.-based DeepMind, which Google acquired for about 400 million pounds ($533 million) in 2014, developed an artificial intelligence called WaveNet that can mimic human speech by learning how to form the individual sound waves a human voice creates, it said in a blog post Friday. In blind tests for U.S. English and Mandarin Chinese, human listeners found WaveNet-generated speech sounded more natural than that created with any of Google’s existing text-to-speech programs, which are based on different technologies. WaveNet still underperformed recordings of actual human speech.

Many computer-generated speech programs work by using a large data set of short recordings of a single human speaker and then combining these speech fragments to form new words. The result is intelligible and sounds human, if not completely natural. The drawback is that the sound of the voice cannot be easily modified. Other systems form the voice completely electronically, usually based on rules about how certain letter combinations are pronounced. These systems allow the sound of the voice to be manipulated easily, but they have tended to sound less natural than computer-generated speech based on recordings of human speakers, DeepMind said.

WaveNet is a type of AI called a neural network that is designed to mimic how parts of the human brain function. Such networks need to be trained with large data sets.

‘Challenging Task’

WaveNet won’t have immediate commercial applications because the system requires too much computational power: it has to sample the audio signal it is being trained on 16,000 times per second or more, DeepMind said. And then for each of those samples it has to form a prediction about what the sound wave should look like based on each of the prior samples. Even the DeepMind researchers acknowledged in their blog post that this “is a clearly challenging task.”
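To get a feel for why that is so expensive, here is a rough sketch in Python of sample-by-sample generation. It is not DeepMind's code or WaveNet's actual model: `predict_next`, `generate`, `predictions_needed` and the weights are made-up stand-ins, and the toy predictor only looks at the last few samples rather than every prior one. The only figure taken from the article is the 16,000 samples per second.

```python
SAMPLE_RATE = 16_000  # samples per second, the figure quoted in the article


def predict_next(history, weights):
    """Toy stand-in for the network: a weighted sum of the most recent samples."""
    recent = history[-len(weights):]
    # The newest sample is paired with the first weight, the one before it
    # with the second weight, and so on.
    return sum(w * s for w, s in zip(weights, reversed(recent)))


def generate(seed, weights, n_samples):
    """Produce n_samples one at a time, each predicted from earlier output."""
    samples = list(seed)
    steps = 0
    for _ in range(n_samples):
        samples.append(predict_next(samples, weights))
        steps += 1  # one full prediction per audio sample
    return samples[len(seed):], steps


def predictions_needed(seconds, sample_rate=SAMPLE_RATE):
    """How many separate predictions a clip of the given length requires."""
    return int(seconds * sample_rate)


if __name__ == "__main__":
    audio, steps = generate([1.0, 0.0], [0.5, 0.25], 3)
    print(audio, steps)
    print(predictions_needed(10))
```

Because every new sample depends on the ones generated before it, the predictions have to be made one after another, so even a ten-second clip at this rate means 160,000 separate passes through whatever model does the predicting.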

End Times

MAD MAX: Los Angeles Erupts Into Dystopian Violence And End Times Chaos After Dodgers Win The World Series Over Yankees In Kamala’s America

Los Angeles descended into chaos Wednesday night as belligerent baseball fans set a bus on fire while others clashed with cops and looters ran amuck following the Dodgers’ World Series victory. This is Kamala’s America.

Last night the LA Dodgers won the World Series, and it should have been cause for good, old-fashioned celebration and cheering amongst their fans, but it wasn’t. Instead, hundreds of angry and violent rioters spread out on the downtown streets of Los Angeles, breaking into stores, looting, rioting, and then setting an MTA passenger bus on fire. The police stood by and watched as it all unfolded, with none attempting to stop the thefts from taking place. This is Kamala Harris’ America, and it is a scary and dangerous place. If she is elected, this is what she will bring with her.

“And there went out another horse that was red: and power was given to him that sat thereon to take peace from the earth, and that they should kill one another: and there was given unto him a great sword.” Revelation 6:4 (KJB)

The America you grew up in is gone, and it’s not coming back. In its place is a bleak and ruinous landscape whose streets are filled with violence, and whose people are waiting for Antichrist. In 2020, when Black Lives Matter and ANTIFA wreaked havoc across our country, murdering dozens and causing $2 billion in damage, Kamala Harris said that those riots were good, were necessary, and that Americans had no right to expect them to stop. In Los Angeles last night, we were given another reminder of what’s coming. If you live in the cities, it’s time to move.

LA erupts into chaos following Dodgers’ World Series victory with looters raiding Nike store, ‘hostile’ mob burning down bus

FROM THE NY POST: The Los Angeles Police Department shared footage of a mob of looters running in and out of a boarded-up Nike store carrying merchandise and throwing it into cars parked outside the store about four miles from Dodger Stadium just after 11 p.m.

The LAPD said they are “aware of the looting” and have made arrests but did not disclose how many suspects were taken into custody. The department also shared that “several businesses” were looted, and multiple properties were “vandalized” during the “violent celebrations” across the city.

Aerial footage from ABC 7 Los Angeles shows dozens of looters calmly walking into and leaving a Nike store with boxes of goods without police interference. As looters ransacked businesses, other areas of downtown Los Angeles were plunged into madness.

This is how they celebrated in Los Angeles last night after the Dodgers won the World Series over the Yankees. Looting, rioting and setting a bus on fire. This is the spirit of Antichrist, get out of the cities while you still can. #DodgersWin pic.twitter.com/95hgdF3V1i

— Now The End Begins (@NowTheEndBegins) October 31, 2024

The LAPD said a “hostile crowd” — estimated to be between 200 and 300 people — lit an MTA bus on fire about a mile away from Dodger Stadium around 12:35 a.m. local time. Footage posted to Citizen captured the moment a massive crowd — the majority of which were wearing Dodgers jerseys and apparel — surrounded and sat atop the graffiti-laced bus.

Members of the crowd were caught on video entering the bus, while others were seen holding up Dodgers flags and taking pictures. Emergency responders quickly arrived on the scene at about 12:45 a.m. local time, monitored the bus fire, and protected nearby structures from potential spread.

WARNING: Kamala Harris in 2020 telling you that the riots that killed two dozen people and caused $2 billion in damage were good, necessary, and that the American people had no right to expect them to stop. This is the America we will get if she becomes president. pic.twitter.com/rjy9fux3g6

— Now The End Begins (@NowTheEndBegins) October 31, 2024

A video posted by @FilmThePoliceLA shows fireworks being set off inside the bus as the crowd shouts in excitement. Another showed the bus fully engulfed in flames and spewing out thick black smoke while police in riot gear set up a perimeter to keep back the crowd.

“Let’s go Dodgers!” the crowd shouted over the sounds of helicopters and police sirens.

The bus then appeared to explode and shot flames up into the air. Firefighters were seen extinguishing the charred remains of the bus, the frame of which was the only part of the transport vehicle that appeared to remain intact. The Los Angeles Fire Department said it remains “in a normal operating mode, handling routine emergencies citywide” and has directed the public to the LAPD for information regarding all the “unlawful gatherings.”

The LAPD also reported that officers trying to disperse the rowdy crowd were being pelted with “rocks,” “bottles,” and “fireworks” from a malicious crowd around where the looting was taking place.

“Extra resources are responding to that area to assist. If you are on the street, at or adjacent to that intersection, leave the area immediately & follow officers orders,” police said. “If you are in the area, please use caution.”

Los Angeles Mayor Karen Bass warned citizens that “violence will not be tolerated” as the city celebrates the Dodgers besting the Yankees during Game 5 in New York, according to Fox 11 Los Angeles.

Now The End Begins is your front line defense against the rising tide of darkness in the last Days before the Rapture of the Church

- HOW TO DONATE: Click here to view our WayGiver Funding page

When you contribute to this fundraising effort, you are helping us to do what the Lord called us to do. The money you send in goes primarily to the overall daily operations of this site. When people ask for Bibles, we send them out at no charge. When people write in and say how much they would like gospel tracts but cannot afford them, we send them a box at no cost to them for either the tracts or the shipping, no matter where they are in the world. We have a Gospel Billboard program. We are now broadcasting Bible studies, Podcasts and a Sunday Service 5 times a week, thanks to your generous donations. All this is possible because YOU pray for us, YOU support us, and YOU give so we can continue growing.

But whatever you do, don’t do nothing. Time is short and we need your help right now. The Lord has given us an open door with a tremendous ‘course’ for us to fulfill that will create an excellent experience at the Judgement Seat of Christ. Please pray for our efforts, and if the Lord leads you to donate, be as generous as possible. The war is REAL, the battle HOT and the time is SHORT…TO THE FIGHT!!!

“Looking for that blessed hope, and the glorious appearing of the great God and our Saviour Jesus Christ;” Titus 2:13 (KJB)

“Thank you very much!” – Geoffrey, editor-in-chief, NTEB

End Times

We Need Your Help To Put Up An NTEB Gospel Witness Billboard In Sugar Land, Texas Across From The Massive 90ft Hindu Idol Of The False God Lord Hanuman

Astonishing photographs of a 90-foot statue of Hindu god Lord Hanuman show massive end times idolatry rising up in Sugar Land, Texas. Sugar Land needs the gospel; let’s give it to them.

America is under spiritual attack as never before, and the perfect symbol of that can be seen today in the town of Sugar Land, Texas. What you’ll find there is a massive 90ft tall idol of the Hindu god Lord Hanuman, and as you can see from the photo, it looks every bit as demonic as it sounds. Today on our Monday Prophecy News Podcast, the idea was floated to raise up an NTEB Gospel Witness Billboard across the street, to let the people of Sugar Land, Texas know that there is a God in Heaven, and they are to serve Him and Him alone. Man, that’s a great idea!

“And it shall be, if thou do at all forget the LORD thy God, and walk after other gods, and serve them, and worship them, I testify against you this day that ye shall surely perish.” Deuteronomy 8:19 (KJB)

Started during the Pandemic in 2021, the NTEB Gospel Witness Billboard Program has put up dozens of billboards in multiple states proclaiming that Jesus Christ is the only Saviour. These billboards have been seen tens of millions of times over the past 3 years by millions of drivers. We asked listeners in the chat room today how they felt about raising money for a ‘Lord Hanuman’ billboard, and the response was overwhelmingly in favor of doing that, and donations have already started coming in. Now we turn to you.

- HOW TO DONATE: Click here to view our WayGiver Funding page

If you think a Gospel billboard across from this demonic monstrosity would be a good idea, please visit our WayGiver funding page and make a donation. One month for a vinyl billboard is approximately $1,250, and a full year just under $15,000. We will keep this billboard up for as long as there is funding to support it, and hopefully we can keep it up at least until the end of the year. Christian, this is a great way to stand against the gathering end times darkness, while at the same time standing for the Lord Jesus Christ and the gospel of the kingdom of God. If God has prospered you, please join us in this effort. Thank you as always…TO THE FIGHT!!!

The 90ft tall idol of the Hindu god ‘Lord Hanuman’ rises above Sugar Land, Texas

FROM NEWSWEEK: The monument, known as the Statue of Union, stands at the Sri Ashtalakshmi Temple in Sugar Land. Lord Hanuman is depicted as human, but with the head and tail of a monkey, as is customary. The statue is holding both hands palm forward and the end of the tail is twisted above the head, creating a halo effect.

Hanuman is a Hindu god known for his power, courage, and selfless service. He is also a symbol of power and celibacy.

The statue’s unveiling on Sunday marks rapidly changing religious demographics in the U.S., with surveys showing a decline in the proportion of Americans who identify as Christian and a rise in those who say they are “nothing in particular” or belong to religions which are followed by a minority in the U.S.

This is a Hindu idol built to worship the god Lord Hanuman, only this is not outside of a temple in India. This 90ft. monstrosity is in Sugarland, Texas. Where are all the Christians??? #LordHanuman pic.twitter.com/0slhDjZrFY

— Now The End Begins (@NowTheEndBegins) August 26, 2024

Speaking to Newsweek, Ranganath Kandala, joint secretary of the Ashtalakshmi Temple, confirmed the statue is 90 feet tall and said it was inaugurated in a Pran Pratishtha ceremony on August 18, celebrating it as a living embodiment of the deity.

Artificial Intelligence

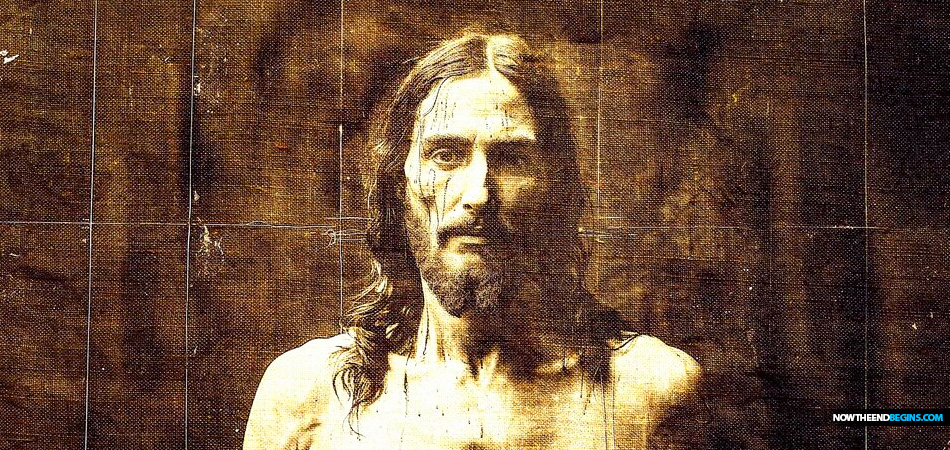

Social Media Explodes Over Claims Saying That AI Reveals The ‘Face Of Jesus’ Using The Shroud Of Turin, But It’s All Part Of The Rising End Times Deception

Artificial intelligence has recreated the ‘face of Jesus Christ’ from the Shroud of Turin that some believe was used to wrap him after his Crucifixion. AI is lying to you.

We live in an age of great deception that is combined with great tools with which to disseminate that deception on a global level never before seen in human history. The Shroud of Turin is an obvious fake based on John 20:7 in your King James Bible, but that doesn’t stop Roman Catholics who have made an idol out of it. Now with AI being applied to the Shroud, images of ‘what Jesus actually looked like!!’ are being published, and it’s all a deception, every bit of it. Deception is the first and greatest sign of the end times according to Jesus.

“And Jesus answered and said unto them, Take heed that no man deceive you. For many shall come in my name, saying, I am Christ; and shall deceive many.” Matthew 24:4,5 (KJB)

In the lukewarm Laodicean end-of-the-Church Age period in which we live, Christians are operating on feelings and emotions, and doctrine is out the window, exactly as Paul says it will be in 2 Timothy 4:1-5. It is the preaching and teaching of Bible doctrine that keeps the Church on the straight and narrow path. Remove that (and it has been removed), and you’ve destroyed the foundation on which the Church sits. Back in 2021, we told you about how you just might expect to see people claiming to have found the bones of Jesus, and you just might. You will never find on this Earth the Ark of the Covenant, the ‘holy grail’, the nails that pierced Jesus, or any of the wood from the cross they hung Him on. The Shroud of Turin? An obvious fake if you believe the Bible. Christian, you better climb inside your King James Bible and stay there for the duration; it’s the only safe space there is.

‘Face of Jesus’ unveiled by AI using Shroud of Turin after astonishing discovery

FROM THE DAILY EXPRESS UK: The Shroud of Turin has divided opinion for centuries, with some claiming an outline of Christ’s face can even be seen in the material. Others routinely dismiss it as a forgery but new technology used by Italian scientists suggests that the 14ft linen sheet may indeed date back to the time of Christ. And now, AI has been used to reinterpret the enigmatic holy relic to reveal the “true face of Jesus”. The Daily Express used cutting-edge AI imager Midjourney to create a simulation of the face behind the shroud.

The images appear to show Christ with long flowing hair and a beard – much like many classical depictions of him. There appear to be cuts and grazes around his face and body, pointing to the fact he had just been killed.

While sceptics believe an unknown 14th-Century artist faked the “shroud of the Messiah” using powdered paint on either a sculpture or the body of a model, many Catholics are convinced that the bolt of cloth was somehow imprinted with Christ’s image at the moment of resurrection.

In the 1980s, radiocarbon analysis determined that the cloth used to create the shroud dated from the mid-1300s, shortly before its documented history began.

But Dr. Liberato de Caro from Italy’s Institute of Crystallography, using a new method known as Wide-Angle X-ray Scattering, has sensationally claimed that the fabric is a good match for a similar sample that is confirmed to have come from the siege of Masada, Israel, in 55-74 AD. Dr de Caro has cast doubt on the accuracy of carbon dating. He wrote: “Moulds and bacteria, colonising textile fibres, and dirt or carbon-containing minerals, such as limestone, adhering to them in the empty spaces between the fibres that at a microscopic level represent about 50% of the volume, can be so difficult to completely eliminate in the sample cleaning phase, which can distort the dating.”

He added that, because the X-ray scattering technique is non-destructive, the same sample could be tested by labs around the world, helping to confirm his findings.

In additional support for his claims, Dr de Caro pointed out that tiny particles of pollen from the Middle East had been lodged between the fibres of the linen, ruling out the common belief that the shroud is a European forgery. While there is no hard evidence of the Shroud existing before the mid-1300s, a similar relic – which supporters believe was the same object – was reportedly stolen from a church in Constantinople a century before.

It bears the ghostly image of a man around six feet in height who bears wounds consistent with whipping and crucifixion. With the invention of photography at the end of the 19th Century, the shroud was photographed – revealing that the negative image was much more vivid than the faded “scorch mark” visible to the naked eye.

Over the years, a number of sceptics have attempted to recreate the centuries-old image, with mixed results. While the balance of probabilities lies with the object having been produced by an unidentified faker in the mid-1300s, whoever created it would have had remarkable, almost supernatural skill.
